Choosing between GPT and open LLMs is no longer a side decision for product teams. It can shape your mobile app growth for years. The model you pick affects speed, cost, privacy, and user trust. It also affects how fast your team can ship AI features that people actually use.

For many companies, the goal is simple. Improve retention. Reduce support load. Increase conversions. AI can help with all three. But the “right” AI depends on your business needs, not headlines. A hosted model like GPT can help you launch fast. Open models can give you more control and lower long-run costs at scale.

GPT vs Open Source LLMs refers to the choice between hosted AI models accessed via APIs and open-source models that can be self-hosted. Hosted models offer faster setup and ease of use, while open LLMs provide more control, customization, and cost efficiency at scale.

This post explains the GPT or open LLMs choice in simple business terms. You will see where each option wins, what it costs, and how to test before you commit. You will also get a rollout plan you can run in 30–60 days, without turning your roadmap upside down.

What is GPT vs Open Source LLMs?

GPT or Open LLMs means choosing between (1) hosted AI models you access by API (fast to start, less control) and (2) open source LLMs you can self-host and customize (more control, more setup).

If you want a short primer that explains the idea in everyday words, see this guide on what LLMs are and why they matter.

Two choices, one goal

No matter what you pick, the goal stays the same:

- Ship useful AI features

- Protect your brand and users

- Grow revenue and lower costs

That is the real point of the GPT or open LLMs decision.

Comparison table

| Feature | GPT | Open LLMs |
| --- | --- | --- |
| Setup | Easy | Complex |
| Cost | Variable | More predictable at scale |
| Control | Low | High |
| Speed | Fast | Depends |

Why does this decision drive mobile app growth?

Mobile users are impatient. They want answers fast. They want a smooth flow. They leave when they feel stuck.

A strong business AI strategy uses AI to remove friction in key moments:

- First-time onboarding

- Searching and discovering content

- Getting help and resolving issues

- Completing a purchase or booking

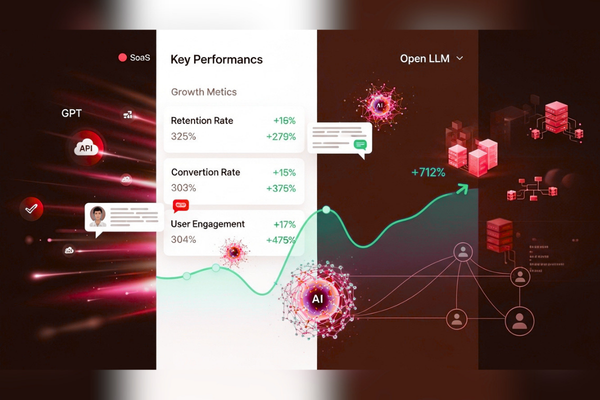

When you evaluate GPT or Open LLMs, connect the decision to clear growth metrics:

- Activation rate (users reach the value faster)

- Retention (users keep coming back)

- Conversion rate (more users buy or upgrade)

- Support cost per user (tickets go down)

For example, AI-powered support systems can reduce customer support tickets by 20–30% when implemented correctly. This is why large language models for business have become a core growth lever, not a “nice to have.”

Key Differences Between GPT and Open Source LLMs

Most teams get stuck on one question: “Which model is better?”

A better question is: “Which model helps us grow with less risk?”

Hosted GPT-style models (API-first)

Hosted models are easy to start. You send a prompt. You get an answer. You pay per use.

Pros

- Fast time to market

- Strong general writing and chat

- Less infrastructure work

- Easy scaling

Cons

- Ongoing usage costs

- Vendor dependency

- Less control over data flow

A common example is using services from OpenAI.

Open source LLMs (run it yourself)

Open models can be downloaded, hosted, and tuned. These are the core of many GPT alternatives.

Pros

- More control and customization

- More predictable costs at high volume

- Easier to keep data inside your environment

- Less vendor lock-in

Cons

- More setup and maintenance

- You own uptime and monitoring

- Quality can vary by model and task

This tradeoff is the heart of the GPT or open LLMs decision.

Business value and ROI (what leaders want to see)

AI should drive measurable outcomes. If you cannot measure it, it becomes a demo that never ships.

Benefits of AI automation include:

- Reduced operational costs

- Faster workflows

- Improved accuracy

- More consistent customer support

- Better user experience

Where AI often pays back fastest

For mobile apps, look for repeat work:

- Repeating support questions

- Repeating onboarding confusion

- Repeating content tagging needs

- Repeating internal team requests

When you decide on GPT or Open LLMs, start with one high-volume problem. Then expand.

Use cases: where GPT or Open LLMs fit best in mobile apps

Below are common use cases that drive real business results. Each can be built with hosted or open models. The best choice depends on your data, scale, and timeline.

In-app support that reduces tickets

Goal: reduce support volume and improve response time.

Common features:

- FAQ assistant

- Order or booking explanations

- Troubleshooting help

- Ticket routing and summaries

Best fit:

- Start hosted if you need speed

- Consider open if you need stronger control

An onboarding coach that improves activation

Goal: help users reach value faster.

Common features:

- Guided setup Q&A

- Feature discovery tips

- “Next step” prompts

Best fit:

- Hosted often wins early for chat quality

- Open can win later with deeper product rules

Smarter search and discovery

Goal: help users find what they want quickly.

Common features:

- Semantic search

- Auto-tagging content

- Summaries for long content

Best fit:

- Open models can be cost-effective at scale

- Hosted models can be quicker to tune and deploy

Internal team copilots (ops, QA, sales)

Goal: speed up teams and reduce manual work.

Common features:

- Drafting replies and notes

- Summarizing calls

- Extracting tasks and action items

Best fit:

- Hosted is faster to start

- Open is stronger when privacy is strict

If you want a real example of building platforms that support scale, this Dropship Academy project case study shows how product execution and automation choices can support growth goals.

AI model comparison: a simple scorecard you can use

A strong AI model comparison does not need complex math. It needs fair testing against your real workflows.

Score models on business outcomes

Use a 1–5 score for each area:

- Accuracy: Is it correct for your content?

- Helpfulness: Does it solve the user’s problem?

- Consistency: Does it follow your rules?

- Speed: Does it feel fast on mobile?

- Safety: Does it avoid risky output?

- Cost per outcome: Cost per ticket solved, not per message

The “50 real prompts” test

To choose between GPT and open LLMs, run this quick test:

- Collect 50 real user questions from support

- Add 10 edge cases (refunds, angry users, policy questions)

- Add 10 onboarding questions for new users

- Test both model paths with the same inputs

- Score results and review failures

This turns opinions into data. It also makes your choice easier to defend.
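
The scoring step can live in a spreadsheet, but a few lines of code work too. Here is a minimal sketch, assuming reviewers have already scored each answer 1–5 on the quality criteria above. The prompt ids and scores are made-up sample data, and `summarize` and `failures` are illustrative names, not part of any library.

```python
from statistics import mean

# The five quality criteria from the scorecard, each scored 1-5 by a reviewer.
CRITERIA = ["accuracy", "helpfulness", "consistency", "speed", "safety"]


def summarize(rows):
    """Average each criterion across all scored prompts for one model path."""
    return {c: round(mean(r[c] for r in rows), 2) for c in CRITERIA}


def failures(rows, threshold=2):
    """List prompt ids where any criterion scored at or below the threshold."""
    return [r["prompt_id"] for r in rows if min(r[c] for c in CRITERIA) <= threshold]


# Hypothetical review scores for the same two prompts on each path.
hosted = [
    {"prompt_id": "refund-01", "accuracy": 4, "helpfulness": 5, "consistency": 4, "speed": 5, "safety": 5},
    {"prompt_id": "angry-02", "accuracy": 3, "helpfulness": 3, "consistency": 2, "speed": 5, "safety": 4},
]
open_model = [
    {"prompt_id": "refund-01", "accuracy": 4, "helpfulness": 4, "consistency": 5, "speed": 3, "safety": 5},
    {"prompt_id": "angry-02", "accuracy": 3, "helpfulness": 4, "consistency": 4, "speed": 3, "safety": 5},
]

for name, rows in [("hosted", hosted), ("open", open_model)]:
    print(name, summarize(rows), "needs review:", failures(rows))
```

The failure list matters as much as the averages. One low score on a refund or policy question can outweigh a strong average.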

How to Choose the Right AI Model

To choose between GPT and open source LLMs:

- Start with one use case

- Test both models with real data

- Measure cost per solved outcome

- Scale the option that performs best

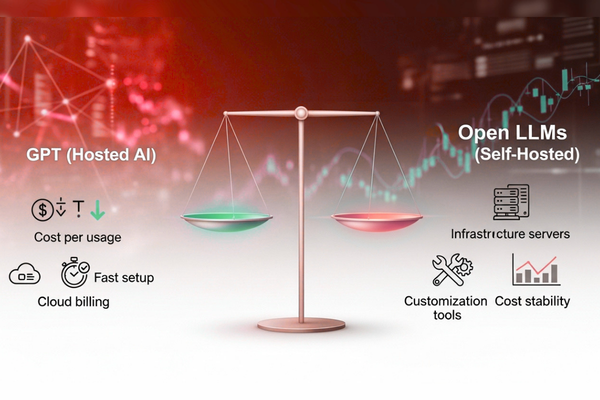

Cost Comparison: GPT vs Open Source LLMs

An honest LLM cost comparison includes more than model fees. You also pay for build time, testing, monitoring, and improvement.

Development cost ranges (planning table)

| AI Development Type | Estimated Cost |
| --- | --- |
| AI Chatbot | $10k – $50k |
| AI SaaS | $50k – $200k |
| Enterprise AI | $100k+ |

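For example, once volume is high enough, a chatbot can cost significantly more per month on hosted APIs than on a self-hosted model, after the initial setup is paid for. Whether 100,000 monthly queries crosses that line depends on your per-query price and your hosting costs.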

What drives cost the most

When deciding between GPT and open LLMs, these cost drivers matter:

- Number of users and messages

- Response speed requirements

- Data privacy and security work

- Integration with CRM, helpdesk, or analytics

- Ongoing monitoring and human review

Hosted vs open (cost reality)

- Hosted models: lower upfront cost, higher variable usage cost

- Open models: higher upfront cost, more stable cost at scale
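
A rough break-even calculation makes this tradeoff concrete. The sketch below assumes a simple model: hosted cost scales with volume, while self-hosted cost is a fixed monthly amount plus a small per-query cost. Every price in it is a placeholder, not a quote from any vendor. Swap in your own numbers.

```python
import math


def hosted_monthly_cost(queries, price_per_1k):
    """Pay-per-use: cost grows in line with query volume."""
    return queries / 1000 * price_per_1k


def open_monthly_cost(queries, fixed_monthly, marginal_per_1k):
    """Self-hosted: fixed infrastructure and staffing plus a small per-query cost."""
    return fixed_monthly + queries / 1000 * marginal_per_1k


def break_even_queries(price_per_1k, fixed_monthly, marginal_per_1k):
    """Monthly volume at which self-hosting stops costing more; None if it never does."""
    saving_per_1k = price_per_1k - marginal_per_1k
    if saving_per_1k <= 0:
        return None
    return math.ceil(fixed_monthly / saving_per_1k * 1000)


# Placeholder numbers only: $10 per 1,000 hosted queries,
# $3,000 fixed monthly hosting, $2 per 1,000 self-hosted queries.
volume = 100_000
print(hosted_monthly_cost(volume, 10))      # 1000.0
print(open_monthly_cost(volume, 3000, 2))   # 3200.0
print(break_even_queries(10, 3000, 2))      # 375000
```

With these placeholder prices, hosted is cheaper at 100,000 queries and self-hosting only wins above 375,000. Different prices move that line a lot, which is why the calculation is worth running with your real figures.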

If you want a fast way to map scope to budget, this project estimation flow for AI builds helps you set a realistic range before you start.

AI performance benchmarks: what to measure before you scale

Most teams only test “Does it sound smart?” That is not enough. Your AI performance benchmarks should match real user experience.

Benchmarks that matter for mobile UX

Track these early:

- Latency: Does it respond fast enough?

- Completion rate: Does it finish the task?

- Escalation rate: How often does it hand off to humans?

- Hallucination rate: How often does it make things up?

- User satisfaction: A simple thumbs up/down works
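
If you log each AI interaction during the pilot, these benchmarks are easy to compute. The sketch below assumes a hypothetical log record with one field per benchmark. The field names and sample values are illustrative, and the hallucination flag is assumed to come from human review, not from the model itself.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class Interaction:
    latency_ms: float
    completed: bool             # the user finished the task
    escalated: bool             # handed off to a human
    hallucinated: bool          # flagged as made-up during human review
    thumbs_up: Optional[bool]   # None when the user left no rating


def rate(flags):
    """Share of True values, or None when there is nothing to count."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else None


def p95(values):
    """95th percentile by the nearest-rank method; assumes at least one value."""
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]


def benchmark(logs):
    return {
        "p95_latency_ms": p95([x.latency_ms for x in logs]),
        "completion_rate": rate(x.completed for x in logs),
        "escalation_rate": rate(x.escalated for x in logs),
        "hallucination_rate": rate(x.hallucinated for x in logs),
        "satisfaction": rate(x.thumbs_up for x in logs if x.thumbs_up is not None),
    }


# Hypothetical pilot logs.
logs = [
    Interaction(800, True, False, False, True),
    Interaction(1200, True, False, False, None),
    Interaction(950, False, True, False, False),
    Interaction(2100, True, False, True, True),
]
print(benchmark(logs))
```

Tracking the slowest 5% of responses, not just the average, is a deliberate choice here. Slow outliers are what impatient mobile users notice.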

Tie benchmarks to money

When choosing between GPT and open LLMs, measure “cost per solved outcome,” like:

- Cost per ticket deflected

- Cost per onboarding completion

- Cost per qualified lead

This makes the model choice a business decision, not a vibe check.
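
The arithmetic is simple: total monthly spend divided by outcomes achieved. A minimal sketch, assuming you count both model spend and the people time spent on review. All figures are hypothetical.

```python
def cost_per_outcome(model_cost, people_cost, outcomes):
    """Total monthly spend divided by outcomes achieved; None if there were none."""
    if outcomes <= 0:
        return None
    return (model_cost + people_cost) / outcomes


# Hypothetical monthly figures for one support pilot (outcome = ticket deflected).
paths = {
    "hosted": {"model_cost": 1200, "people_cost": 300, "outcomes": 1500},
    "open": {"model_cost": 3200, "people_cost": 400, "outcomes": 1200},
}
for name, figures in paths.items():
    print(name, cost_per_outcome(**figures))
```

A path with a cheaper model can still lose on this metric if it solves fewer tickets.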

Data, privacy, and self-hosted AI models

Privacy is often the deciding factor. This is where self-hosted AI models can be a strong choice.

When privacy is a hard requirement

You may need stronger control if you handle:

- Health or financial data

- Private messages or identity data

- Enterprise client data under contract

- Strict regional storage rules

In these cases, the GPT or open LLMs question often becomes: “How much control do we need?”

Hybrid is common (and practical)

Many teams do this:

- Use hosted models for general language tasks

- Use open models for sensitive workflows

- Route requests based on risk level

This approach reduces risk while keeping speed.
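
Risk-based routing can start as a simple rule check before each request. The sketch below is one possible approach, not a complete privacy filter. The patterns, keywords, and route names are assumptions for illustration, and a real system would need rules reviewed by your compliance team.

```python
import re

# Illustrative signals that a message may contain sensitive data.
SENSITIVE_PATTERNS = [
    re.compile(r"\b(?:\d[ -]?){13,16}\b"),  # long digit runs that look like card numbers
    re.compile(r"\b(diagnosis|prescription|account number|passport)\b", re.IGNORECASE),
]


def risk_level(text):
    """Return 'high' if any sensitive pattern appears in the text, else 'low'."""
    return "high" if any(p.search(text) for p in SENSITIVE_PATTERNS) else "low"


def route(text):
    """Send high-risk requests to the self-hosted model, the rest to the hosted API."""
    return "self_hosted_open" if risk_level(text) == "high" else "hosted_api"


print(route("How do I reset my password?"))
print(route("My account number is 12345, why was I charged twice?"))
```

Rule-based checks like this miss things. When in doubt, defaulting to the more private path is the safer failure mode.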

This is where self-hosted AI models become a strong alternative to GPT, especially for companies with strict compliance, security, or data residency requirements.

If you want a reference point for structured delivery where trust matters, this NDMS case study on enterprise platform work shows the kind of execution discipline that supports privacy and reliability goals.

Enterprise AI solutions: picking the right stack for real teams

For larger teams, the model is only one part. You also need processes and guardrails. That is why enterprise AI solutions are about systems, not single tools.

A practical stack (simple and effective)

Most AI tools for companies fall into these buckets:

- Model access (hosted or open)

- Knowledge source (docs, FAQs, policy pages)

- Retrieval layer (to ground answers in your content)

- Monitoring and analytics (quality, cost, safety)

- Human review path (edge cases and compliance)

Keep it simple at first

When you compare GPT or Open LLMs, do not buy five tools at once.

Start with:

- One use case

- One model path

- One feedback loop

If your cloud setup is already aligned with Google, it can help to review enterprise AI platforms like Google Cloud AI options for enterprise rollout and integration planning.

GPT vs Open LLMs: When to Choose Each

Choose GPT if:

- You need to launch fast

- You lack ML infrastructure

- You want strong out-of-the-box performance

Choose Open Source LLMs if:

- You need full control over data

- You operate at high scale

- You want predictable long-term costs

Choose Hybrid if:

- You want speed + control

- You handle both sensitive and general tasks

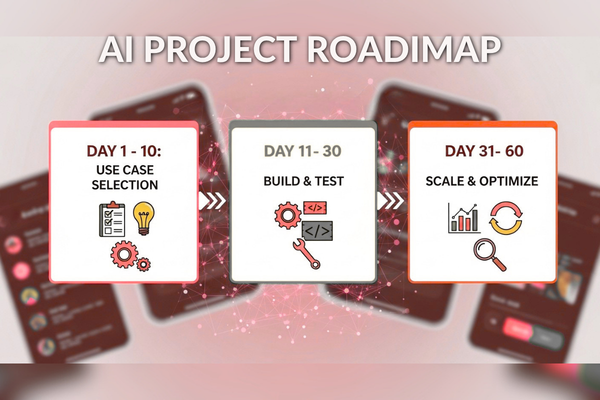

A 30–60 day rollout plan that reduces risk

You do not need a massive rewrite to get value. You need a controlled pilot.

Days 1–10 — pick one measurable use case

Good first pilots:

- Support assistant for 20–50 common questions

- Onboarding coach for the top 5 setup issues

- Internal summarizer for tickets or calls

Pick 2 metrics:

- Example: reduce tickets by 15%

- Example: improve activation by 5%

This keeps the GPT or open LLMs decision focused on outcomes.

Days 11–30 — build guardrails and test

Add basics that prevent brand damage:

- Clear system rules (what it can and cannot do)

- Retrieval from approved content

- Logging for failures

- Fallback messages when unsure
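
Three of these guardrails fit in one small function: retrieval from approved content, logging for failures, and a fallback when unsure. The sketch below is a toy, assuming keyword overlap stands in for a real retrieval layer. The approved passages are placeholders, and a production system would pass the retrieved passage to the model instead of returning it directly.

```python
import logging
import re

logger = logging.getLogger("assistant")

# Hypothetical approved content; real text would come from your docs and policies.
APPROVED = {
    "refund policy": "Placeholder: approved refund policy text.",
    "reset password": "Placeholder: approved password reset steps.",
}
FALLBACK = "I am not sure about that. Let me connect you with our support team."


def retrieve(question, min_overlap=2):
    """Return the approved passage whose topic words best match the question."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    best_text, best_score = None, 0
    for topic, text in APPROVED.items():
        score = len(words & set(topic.split()))
        if score > best_score:
            best_text, best_score = text, score
    return best_text if best_score >= min_overlap else None


def answer(question):
    """Answer only from approved content; log the miss and fall back otherwise."""
    passage = retrieve(question)
    if passage is None:
        logger.warning("No approved content for: %r", question)
        return FALLBACK
    return passage


print(answer("What is your refund policy?"))
print(answer("Tell me a joke"))
```

The logged misses double as a to-do list. Each one points to content you may need to add before you expand.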

Days 31–60 — improve and expand

Scale only what works:

- Fix weak prompts and missing content

- Add routing (hosted vs open) if needed

- Add human review for sensitive flows

This is how large language models for business become reliable product features.

Start Your AI Development Project

If your team is deciding between GPT or Open LLMs, our experts at Canadian Software Agency can help you design and build AI that supports real growth. We focus on practical delivery, measurable results, and safe production systems.

You can explore our AI services for mobile apps and business teams to see how we plan, build, and scale AI features.

Get a free consultation to evaluate whether GPT or open source LLMs are the right fit for your product and growth goals.

Conclusion: Choose GPT or Open LLMs based on growth goals

The best choice between GPT and Open LLMs depends on your timeline, your data risk, your traffic volume, and your need for control. Hosted GPT-style models can be the fastest path to launch and learning. Open source LLMs can be a strong long-term option when you need deeper control, stable costs at scale, and tighter privacy.

Do not guess. Run a real pilot. Test real prompts. Track real metrics. Then scale what works. When you treat GPT or Open LLMs as a growth decision, you ship faster, spend smarter, and build user trust.

FAQs

1) Are open source LLMs good enough for production apps?

Yes, often. Many open source LLMs perform well for tagging, search, summaries, and structured flows. You still need testing and monitoring.

2) What are the best GPT alternatives for business use?

The best GPT alternatives depend on your task, privacy needs, and budget. Test a few with real prompts. Use the same scorecard for each.

3) How should we run an AI model comparison without heavy tools?

Use 50–100 real prompts, score answers 1–5, measure speed, and track cost per solved outcome. That is enough to decide.

4) What is the highest hidden cost in an LLM cost comparison?

Ongoing improvement. Prompts, knowledge content, safety rules, and monitoring all need updates as your product changes.

5) When should we choose self-hosted AI models?

Choose self-hosted AI models when privacy is strict, when you need deep customization, or when high volume makes hosting more cost-effective.